2084: AI-Generated Images with Control

A new paper shows how to control what a diffusion model generates, and where.

Diffusion models are awesome. Type a sentence and you’re an Artiste, even if you could never draw anything bigger than a walnut without looking like a nut. But for any serious work you would want some control: over what is placed in the image and where, which is to say, over the composition.
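Concretely, this kind of control usually means describing the layout alongside the prompt: a short phrase and a bounding box for each object. The sketch below is hypothetical. The helper names `normalize_box` and `build_layout`, the 512×512 canvas, and the box coordinates are all made up for illustration, and the normalized `(x_min, y_min, x_max, y_max)` box format is an assumption, so check the conventions of whatever tool you actually use.

```python
from typing import Dict, List, Tuple

# Hypothetical box format: (x_min, y_min, x_max, y_max)
Box = Tuple[float, float, float, float]


def normalize_box(box_px: Box, width: int, height: int) -> Box:
    """Convert a pixel-space box to [0, 1] coordinates, rejecting boxes off the canvas."""
    x0, y0, x1, y1 = box_px
    if not (0 <= x0 < x1 <= width and 0 <= y0 < y1 <= height):
        raise ValueError(f"box {box_px} does not fit a {width}x{height} canvas")
    return (x0 / width, y0 / height, x1 / width, y1 / height)


def build_layout(prompt: str, objects: List[Tuple[str, Box]],
                 width: int = 512, height: int = 512) -> Dict:
    """Split (phrase, pixel box) pairs into parallel lists of phrases and normalized boxes."""
    return {
        "prompt": prompt,
        "phrases": [phrase for phrase, _ in objects],
        "boxes": [normalize_box(box, width, height) for _, box in objects],
    }


# Hypothetical layout: the bear fills most of the frame, the gift sits in its lower middle.
layout = build_layout(
    "a teddy bear holding a gift",
    [
        ("a teddy bear", (64, 32, 448, 496)),
        ("a gift", (176, 256, 336, 416)),
    ],
)
print(layout["boxes"][1])  # → (0.34375, 0.5, 0.65625, 0.8125)
```

Keeping phrases and boxes as parallel lists means object `i` is described by `phrases[i]` and placed by `boxes[i]`, and rejecting a box that falls off the canvas up front is cheaper than discovering the mistake after a generation run.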

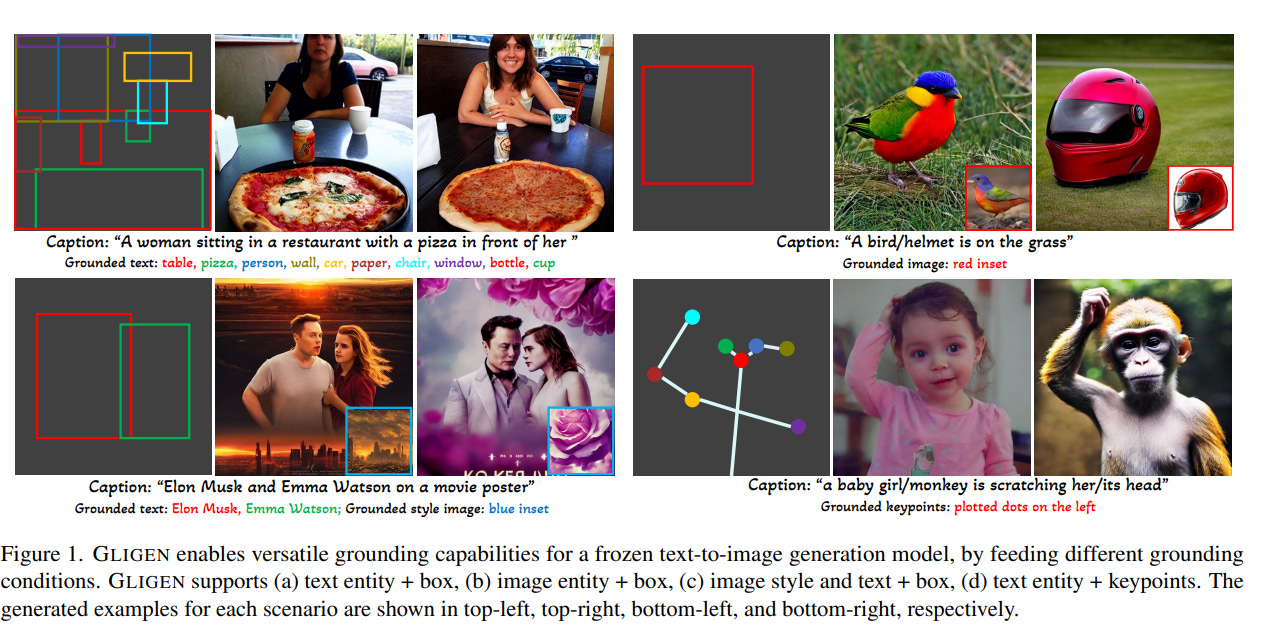

Enter GLIGEN. GLIGEN takes a pretrained text-to-image diffusion model and trains it on a dataset annotated with bounding boxes and their descriptions, adding gated transformer layers (described properly in the paper) so that you can control precisely where things appear in the output, which amounts to controlling its composition. Interestingly, it doesn't train a new model from scratch: it keeps the existing model's weights frozen and injects the new gated layers into it, so only those layers have to learn how to use the box-and-description information. That makes training much shorter. If this can be combined with techniques for preserving style, it could well be good enough for commercial use.
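The gate is what makes it safe to inject new layers into a frozen model. Below is a simplified sketch loosely following the paper's update v ← v + tanh(γ) · TS(SelfAttn([v, h_e])), where v are the visual tokens, h_e are grounding tokens built from the boxes and phrases, and TS ("token selection") keeps only the outputs at the visual-token positions. The single-head attention with no learned query/key/value projections is a simplification for illustration, not the paper's actual layer, and the function names are hypothetical.

```python
import math
from typing import List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def softmax(scores: List[float]) -> List[float]:
    # Subtract the max for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens: List[Vector]) -> List[Vector]:
    """Single-head scaled dot-product self-attention with identity Q/K/V
    projections (a simplification; real layers learn these projections)."""
    d = len(tokens[0])
    out = []
    for q in tokens:
        weights = softmax([dot(q, k) / math.sqrt(d) for k in tokens])
        out.append([sum(w * v[i] for w, v in zip(weights, tokens)) for i in range(d)])
    return out


def gated_self_attention(visual: List[Vector], grounding: List[Vector],
                         gamma: float) -> List[Vector]:
    """v + tanh(gamma) * TS(SelfAttn([v, h_e])): attend over visual and grounding
    tokens together, keep only the visual outputs, and add them back through a gate."""
    attended = self_attention(visual + grounding)
    selected = attended[: len(visual)]  # token selection: drop the grounding outputs
    gate = math.tanh(gamma)
    return [[v_i + gate * a_i for v_i, a_i in zip(v, a)]
            for v, a in zip(visual, selected)]


# Two visual tokens and one grounding token (e.g. an embedded phrase + box).
visual = [[1.0, 0.0], [0.0, 1.0]]
grounding = [[0.5, 0.5]]

print(gated_self_attention(visual, grounding, gamma=0.0))  # → [[1.0, 0.0], [0.0, 1.0]]
print(gated_self_attention(visual, grounding, gamma=1.0))  # gate partly open: tokens shift
```

With γ = 0 the gate is closed and the layer passes the visual tokens through unchanged, so the frozen model initially behaves exactly as it did before; as γ is trained away from zero, the box and phrase information is gradually blended in.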

As it is, it’s already intuitive enough that I could easily create the following image of a teddy bear holding a gift, with the gift correctly positioned as well:

It can’t be long before this tech is implemented in a more powerful model like Midjourney. What a time to be alive!