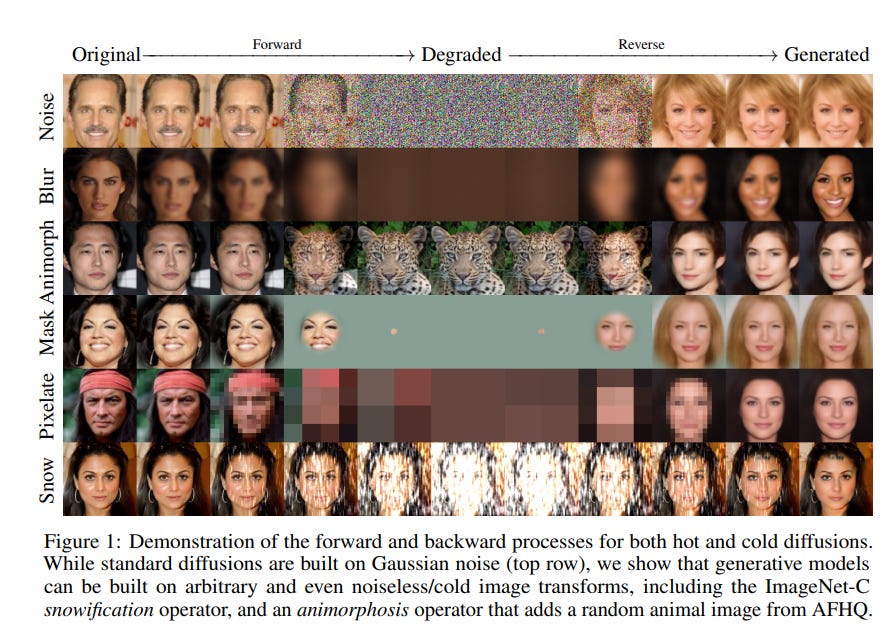

So I found this interesting paper recently in the lucid dreams repository, namely the Cold Diffusion paper. Basically, the paper indicates that it doesn’t matter in which way you deform the image you use in the diffusion models, you can still train a reconstructor to generate a new set of images. This is interesting in that it indicates that the neural networks must essentially be learning the loss of information itself, and that the Gaussian noise typically chosen are not special in the slightest besides their nice mathematical properties.

Of course as specified in the paper, the reconstruction operator needs to be modified a bit to produce an image which can be directly compared with the original, and there’s also a bit of mathematical trickery with the algorithm, but the point still stands that the exact process in which the information is lost is not necessary to specify.

Now this is important as it indicates that the diffusion models are much more general than expected. Imagine for example, a path generating diffusion model trained by masking away paths. Or a diffusion model that can undo camera artifacts and pixelization - we might finally have something that can do the CSI “Zoom and Enhance” in real life! In 2084, we might have diffusion models do just about everything, all just using the basic technique of iteratively reconstructing information.