2084: Sketch to CAD, Sketch to Pose, and Image to Sketch

New tools for changing sketches into useful data, and the other way around.

Stable Diffusion and other text-to-image AIs have been stealing a lot of the headlines lately (understandably, the technology is awesome). However, beyond text-to-image, there have also been a lot of advances in more prosaic yet more useful segments of industry. Of course, not as flashy doesn’t mean not as important: it is the tech that goes deep that lasts, and it all forms part of the coming wave of AI that will be in everything.

For example, take CAD. If you’ve ever worked with a program like AutoCAD, you know that it has quite a steep learning curve, and that it takes a while to learn the commands necessary to transform your test sketch into reality.

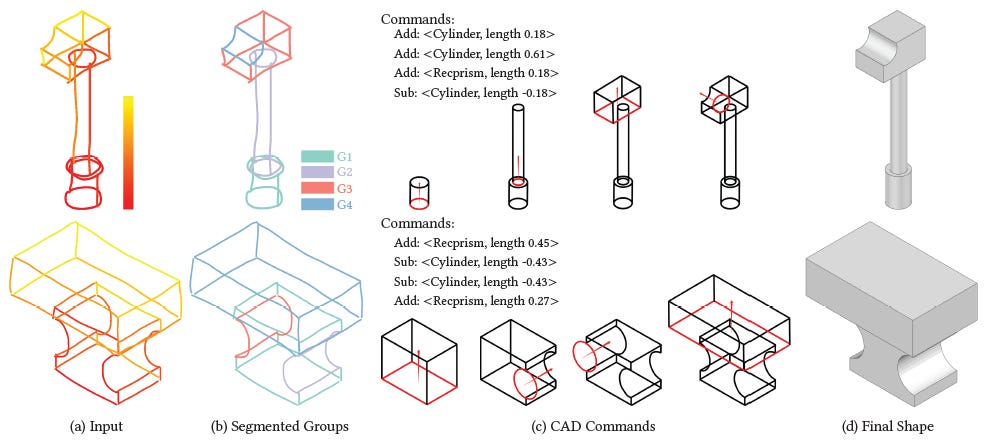

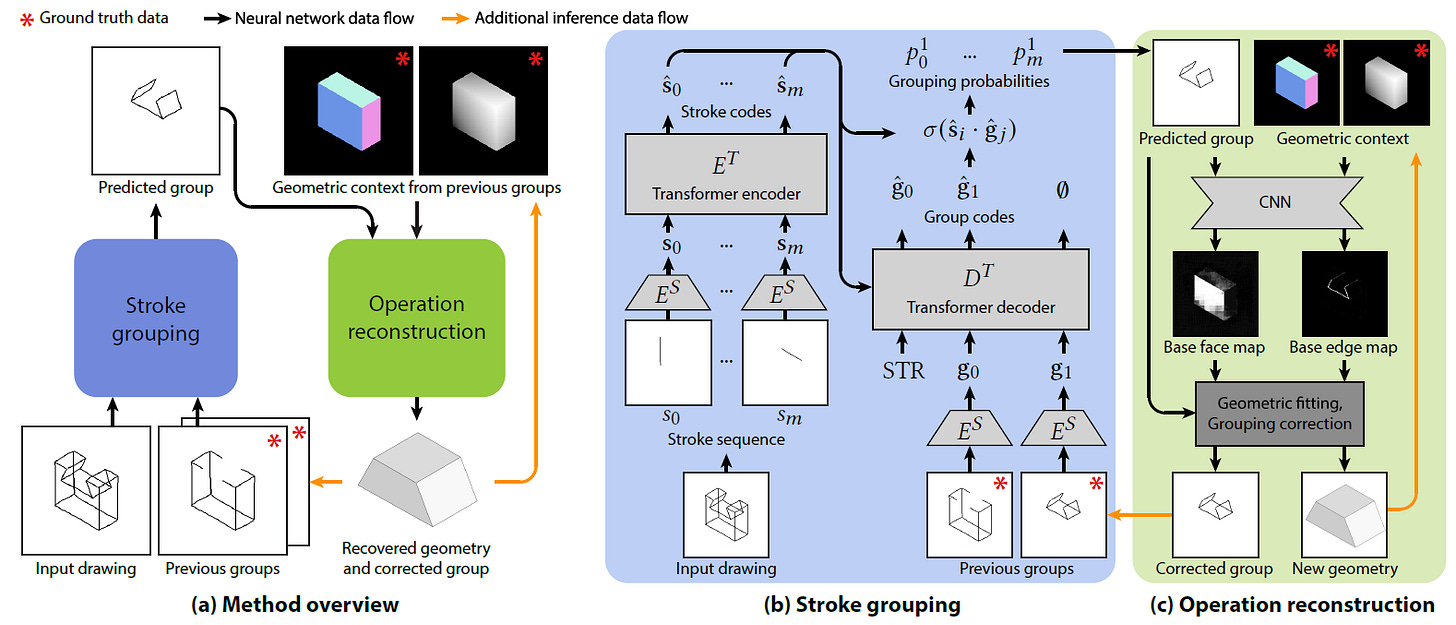

Now a team of computer scientists from UCL, Adobe, Microsoft and UCA has created a tool, Free2CAD, that takes in a freehand sketch and automatically turns it into a sequence of CAD commands.

No more tedious fiddling with the controls, or scaling something too much or too little: now you can just give it a sketch, and it’ll generate the commands for you. The tech is freely available on GitHub, but doesn’t seem to be offered as a website yet (there could be a potential business idea there).

The basic idea is based on a Transformer architecture, like most of the interesting AI models out there today. Essentially, they break a sketch apart into a collection of lines, run those through a Transformer to predict which shapes the sketch consists of (and thus which commands to give), and then pass the commands through a U-Net that makes sure the generated shapes are in accordance with the existing geometry and the space’s geometry. It’s a very smart and rather simple architecture, and they even provide a nice animation on their project page to explain how it works.
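
To make the data flow concrete, here is a minimal Python sketch of the "lines in, commands out" idea. It is not Free2CAD's code: the learned Transformer is replaced by two hand-written placeholder functions (`toy_group` and `toy_label`), the U-Net geometry step is left out entirely, and the operation names `"extrude"` and `"sweep"` are hypothetical labels chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class Stroke:
    """One drawn line, stored as an ordered polyline."""
    points: Tuple[Point, ...]


@dataclass(frozen=True)
class CadCommand:
    """A hypothetical command: an operation label plus the strokes it uses."""
    op: str
    stroke_ids: Tuple[int, ...]


# Stand-ins for the learned parts of the pipeline (assumptions, not the real model).
Grouper = Callable[[Sequence[Stroke]], List[List[int]]]
Labeler = Callable[[Sequence[Stroke]], str]


def sketch_to_commands(strokes: Sequence[Stroke],
                       group: Grouper,
                       label: Labeler) -> List[CadCommand]:
    """Group strokes into shapes, then emit one command per group, in order."""
    commands = []
    for ids in group(strokes):
        members = [strokes[i] for i in ids]
        commands.append(CadCommand(label(members), tuple(ids)))
    return commands


def is_closed(stroke: Stroke, tol: float = 1e-6) -> bool:
    """True if the stroke ends where it started."""
    (x0, y0), (x1, y1) = stroke.points[0], stroke.points[-1]
    return abs(x0 - x1) <= tol and abs(y0 - y1) <= tol


def toy_group(strokes: Sequence[Stroke]) -> List[List[int]]:
    """Placeholder grouper: every stroke becomes its own group."""
    return [[i] for i in range(len(strokes))]


def toy_label(members: Sequence[Stroke]) -> str:
    """Placeholder labeler: closed loops get 'extrude', anything else 'sweep'."""
    return "extrude" if all(is_closed(s) for s in members) else "sweep"


if __name__ == "__main__":
    square = Stroke(((0, 0), (1, 0), (1, 1), (0, 1), (0, 0)))
    line = Stroke(((0, 0), (2, 2)))
    for command in sketch_to_commands([square, line], toy_group, toy_label):
        print(command)
```

The point of the structure is that the grouping and labeling steps are swappable: in the real system those decisions come from a trained model rather than from rules like `is_closed`.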

The videos on the page are well worth watching, if only for how cool they are. I can imagine prototyping becoming much quicker if this tool is integrated into AutoCAD or something similar.

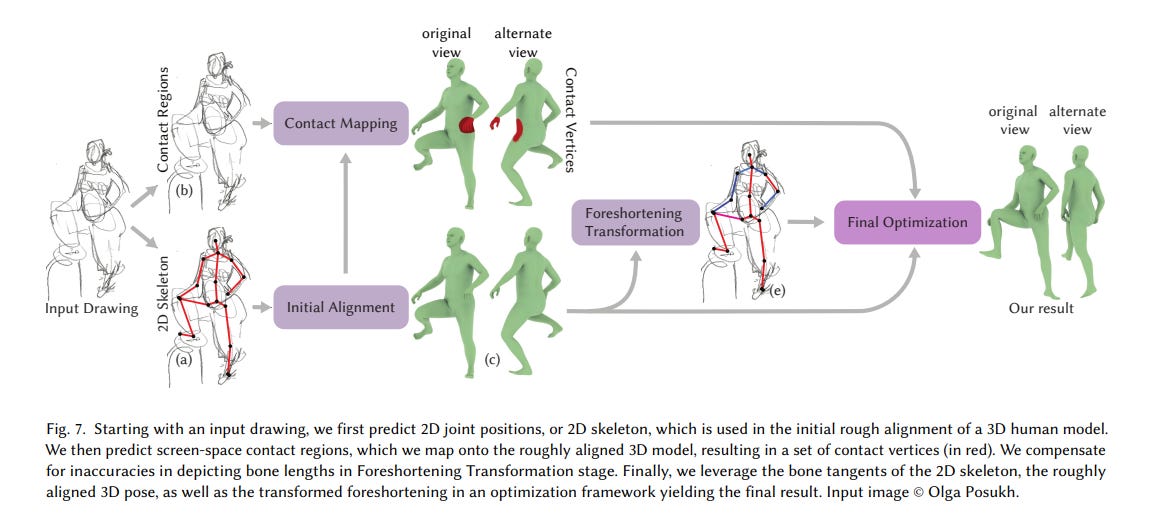

For another interesting sketch-based tool, there’s also Sketch2Pose, developed by two computer scientists at the University of Montreal. It takes in a hand-drawn sketch of a pose and automagically maps it to the correct 3D pose.

Essentially, it uses a series of convolutional neural networks to break apart the input sketch and transform it. Interestingly, as far as I can tell, it does not use a Transformer architecture, which is unusual these days. It is still a really useful tool, however, if you’re a 3D artist.

They have a free demo up on Hugging Face Spaces, which is well worth checking out.

And last but not least, for a bit of fun, there’s also CLIPasso. It comes from a team of researchers at the Swiss Federal Institute of Technology (EPFL), Tel Aviv University, and Reichman University, who managed to do the opposite of Stable Diffusion’s img2img function: take an image and turn it into a sketch again.

They do this by essentially using the intermediate layers of a pretrained CLIP model to constrain the geometry of the output sketch, together with a rasterizer to produce the resulting sketch. It’s a pretty fun tool, and the demo is worth playing with. It’s a reminder that not all AI research has to be serious.
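
For a feel of what "strokes plus a rasterizer" means, here is a toy Python sketch. It assumes the sketch is stored as cubic Bézier curves (which is my understanding of how CLIPasso represents strokes) and draws them onto a small pixel grid. Two big simplifications: this rasterizer is a plain, non-differentiable one, and `pixel_loss` is a crude stand-in for the CLIP-based comparison, so this shows the shape of the loop rather than the actual method. All function names here are made up for illustration.

```python
from typing import List, Tuple

Point = Tuple[float, float]
Curve = Tuple[Point, Point, Point, Point]  # four control points, coords in [0, 1]


def bezier_point(curve: Curve, t: float) -> Point:
    """Evaluate a cubic Bezier curve at parameter t in [0, 1]."""
    (x0, y0), (x1, y1), (x2, y2), (x3, y3) = curve
    u = 1.0 - t
    b0, b1, b2, b3 = u ** 3, 3 * u * u * t, 3 * u * t * t, t ** 3
    return (b0 * x0 + b1 * x1 + b2 * x2 + b3 * x3,
            b0 * y0 + b1 * y1 + b2 * y2 + b3 * y3)


def rasterize(curves: List[Curve], size: int = 16, samples: int = 64) -> List[List[int]]:
    """Draw each curve onto a size x size grid by sampling points along it."""
    grid = [[0] * size for _ in range(size)]
    for curve in curves:
        for i in range(samples + 1):
            x, y = bezier_point(curve, i / samples)
            col = min(size - 1, max(0, int(x * size)))  # clamp to the grid
            row = min(size - 1, max(0, int(y * size)))
            grid[row][col] = 1
    return grid


def pixel_loss(a: List[List[int]], b: List[List[int]]) -> int:
    """Count differing pixels; a placeholder for a CLIP-based comparison."""
    return sum(pa != pb for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))


if __name__ == "__main__":
    stroke: Curve = ((0.1, 0.8), (0.3, 0.1), (0.7, 0.1), (0.9, 0.8))
    for row in rasterize([stroke]):
        print("".join("#" if pixel else "." for pixel in row))
```

In the real tool, the control points are what gets adjusted: the strokes are rendered, the render is compared with the input image, and the curves are nudged to reduce the difference.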

Now, with Stable Diffusion’s sketch-to-image functionality, and the sheer number of interesting sketch-to-something tools coming out, it won’t be long before a simple sketch is enough to drive a whole industrial process. In 2084, a sketch on the side of a tea towel could be immediately transformed into a 3D-printed device, or even a building!